There are hundreds (maybe thousands) of databases available. Why?

The real-world problem whose data is captured by the application needs to be represented by a model. Since the 1980s, most databases have adopted a relational model, which is associated with SQL as the query language. The 2010s saw the rise of NoSQL databases, which follow a simpler key-value model and thus are more versatile and easily scalable.

Under the hood, the database needs to store and retrieve data, and the internals of how this is handled are important to developers because they determine whether it can handle the workload. For example, some applications interact with individual records, whereas others scan many records at a time and perform aggregations. Furthermore, the scale and redundancy requirements of most workloads is such that they require a database that spans multiple machines, which further expands the option space with decisions like sharding and replication.

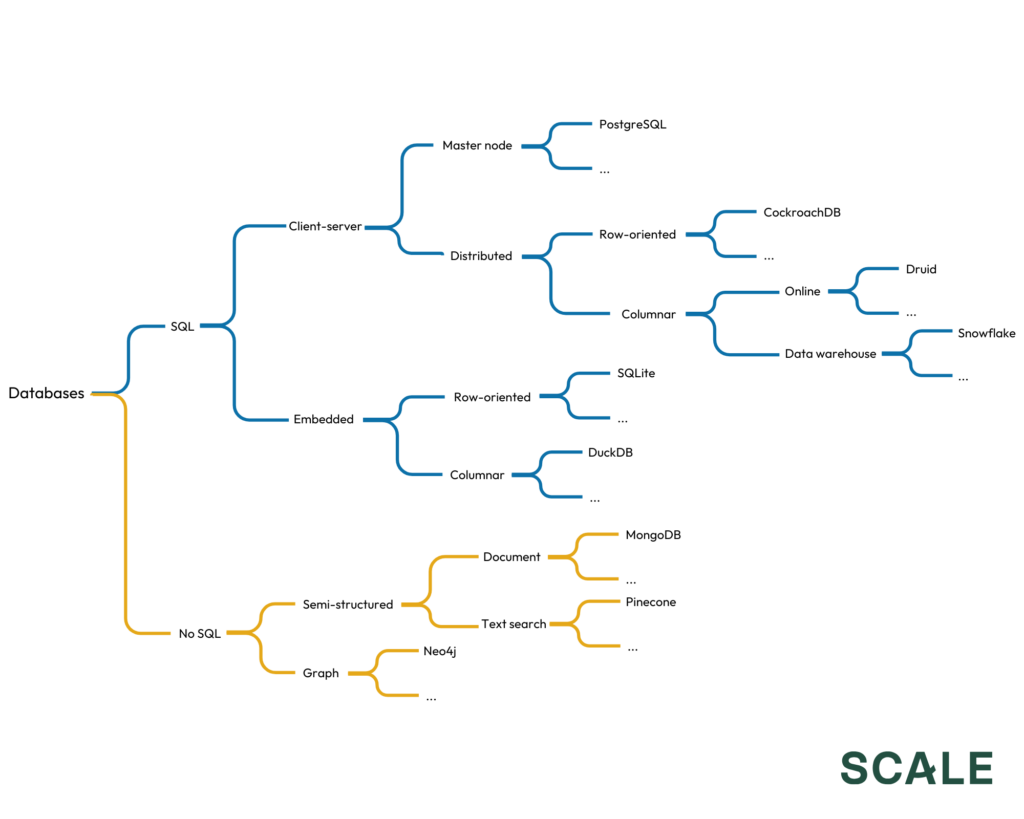

Within each category, there are also multiple implementations that create variants of the same model and give rise to many products. Here’s a (not mutually exclusive or collectively exhaustive) attempt at presenting the complex taxonomy:

The elephant in the room

While the different architectures have led to this level of fragmentation, databases share foundational elements. It is often possible to cater to an adjacent category by adding the option for a different database model and allowing users to swap the implementation through configuration. For example, one might add vector support to a relational database or enable an OLTP database to create tables that use columnar storage for OLAP. For something as complex and mission-critical as a database, the clear benefit of convergence is clear: more users means more hardening and, if the software is open source, more contributions.

Among all the databases, one is starting to emerge as a unifying force: Postgres.

Postgres has been around for over two decades and, prior to this trend, was broadly used across most organizations as the go-to relational OLTP database. Developers trust and (importantly) have specific operational knowledge of Postgres. Besides, there is a broad ecosystem of tools to work with Postgres.

Secondly, the project has a permissive open-source license and governance is highly decentralized. This fosters an ecosystem where developers can freely use Postgres as a foundation to build derivatives without fear of being cut off by a vendor that controls the main project.

Finally, Postgres makes it simple to build incremental value by offering support for extensions. New functionality might sit outside of Postgres (as a “sidecar”), but oftentimes the behavior of Postgres itself needs to be modified. Through the use of extensions, developers don’t need to fork the main project to add features, which simplifies development and retains the core activity and contributor base on the main project.

Problems and solutions

The community has been busy building. What started with a couple of projects that were building on top of Postgres is now a major ecosystem solving for almost every possible use case that special-purpose databases have traditionally tackled:

- Serverless: There is a limited ability to isolate tenants in a Postgres database. By adding features like quotas, it is possible to share the infrastructure without noisy neighbors. This in turn allows organizations (or vendors) to offer scale-to-zero (serverless) Postgres.

- Distributed: Despite some advances in recent versions of Postgres, it is still largely a single-node database. Incorporating distributed database features to Postgres provides benefits like high availability (which improves uptime) and sharding (which reduces latency at high scale).

- Edge: Most databases, including Postgres, are designed to follow a client-server model (the client being the frontend and the server being the backend including database). Some modern applications, however, rely on local storage to do things like improve reactivity or add offline interactivity. This is not what Postgres was designed for, but with the right replication model, it can be used as the application backend and support edge applications.

- Columnar: Postgres is typically used in transactional workloads because storage is oriented in rows, i.e., values from one row are stored together which makes reading entire individual records efficient. Analytical workloads, however, often scan many records of a single column for aggregation, so making Postgres efficient for it involves storing values from the same column together.

- Document: While a SQL table can represent a key-value pair, Postgres lacks the simplicity of the key-value APIs. This gap can be bridged, enabling the existing driver for a document database to talk to Postgres (an “adapter” of sorts).

- Text search: Finding documents based on complex patterns in the text has traditionally been a capability delivered by search engines. A recent form of this type of retrieval has relied on vector embeddings, which became popular in the retrieval-augmented generation (RAG) architecture, as well as in recommendation engines. Support for all these special types of filtering can be added to Postgres, creating a new indexing model.

- Graph: Relational databases like Postgres can represent many-to-many relations, but dense networks are hard to represent and traverse. For scenarios where the data starts to resemble a graph, the ability to reason in the form of vertices and edges can be added to Postgres.

- Time series: Compared to the average Postgres table, time series datasets are particularly large and follow a pattern (timestamp plus associated data, few or no relationships). Special features like compression or retention period (for automated deletion) can significantly improve the performance of Postgres when dealing with time series data.

- Multimodal: The driver for convergence around Postgres is the use of the same underlying database, but each special-purpose variant is often offered by a different vendor who maintains the extension. When their extensions are open source, however, it is also possible to offer them in a single product from the same vendor.

We have seen a number of companies emerge to tackle these use cases:

| Serverless |

Distributed |

Edge |

| Columnar |

Document |

Text search |

| Graph |

Time series |

Multimodal |

Implications for founders

The idea of standardizing on Postgres is gaining traction in the developer community. This represents a major shift from the days of database sprawl.

Succeeding in this space will require a thoughtful response to various concerns:

- Being based on Postgres is probably not enough to compete against the incumbents. Successful startups won’t necessarily support every single feature and outperform special-purpose databases on every benchmark, but they will find other catalysts that can drive migration and target the right customer profile in their GTM.

- Despite the shared open-source Postgres foundation, a common pitfall is that monetization often involves building a managed Postgres service. The surface area to replicate a service like AWS’ RDS is massive. If every Postgres startup does this independently, there will be a lot of duplicate work.

- The licensing strategy will be key given the very open nature of Postgres. On one hand, a permissive license means that every other managed Postgres vendor can offer your product. On the other hand, a restrictive license might hinder adoption or incentivize Postgres vendors to create an open-source alternative. This risk is particularly high when the surface area of the core Postgres addition is smaller than the surface area of building a managed Postgres service.

On the other side of these concerns is a huge opportunity that makes us very excited about this space: many special-purpose databases have built billion dollar-scale businesses, so every category mentioned above is potentially staring at a massive market. To be clear, we’re not calling for an end to all other databases—some design tradeoffs might still make them superior for certain users. But we believe that, for many organizations, Postgres plus [your new amazing startup idea] will probably make the most sense. And, given that developers are already bought in on Postgres, they might ask themselves: do I really need another database?